
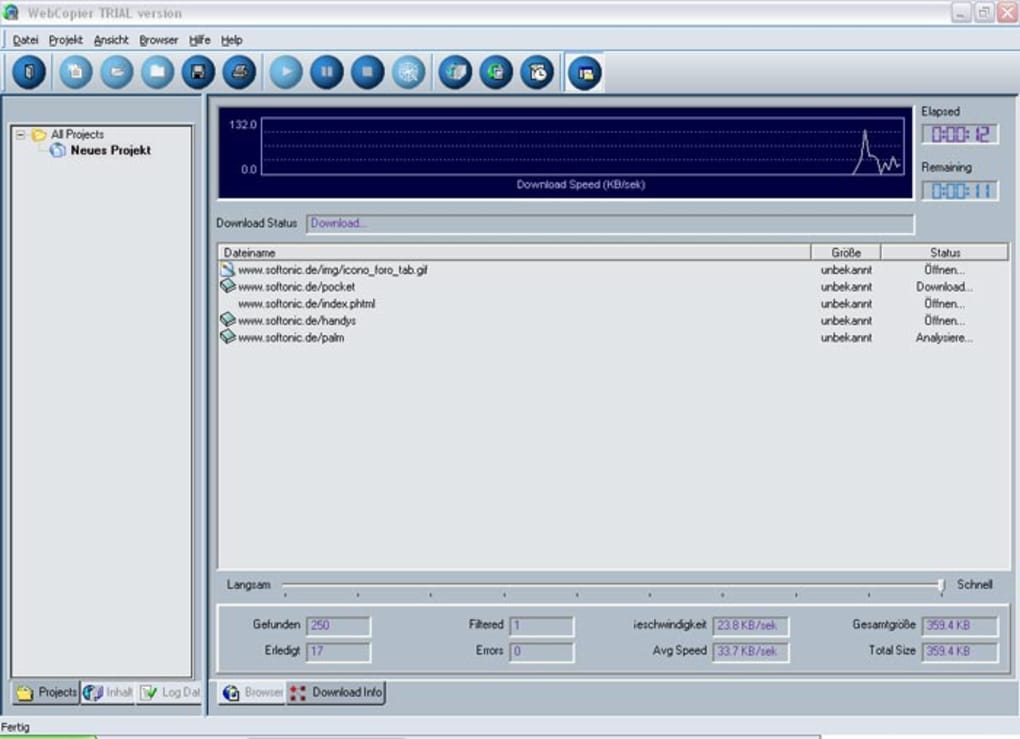

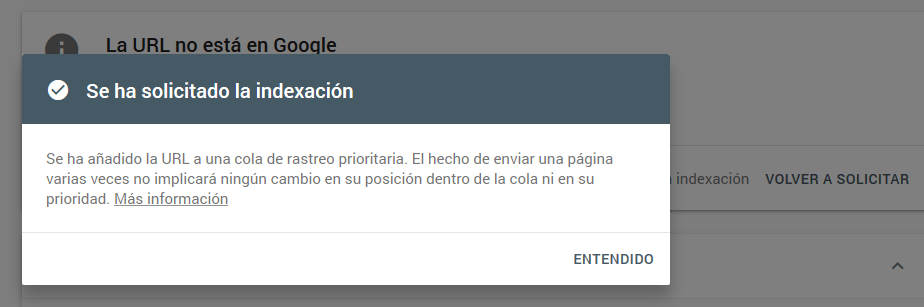

Web crawlers are mainly used to create a copy of all the visited pages for later processing by a search engine, which indexes the downloaded pages to provide fast searches. Crawlers can also automate maintenance tasks on a website, such as checking links or validating HTML code. You can easily spot crawlers in a web server's log files by looking for many requests in a short time from a single IP or subnet.

I want to download a website that uses PHP to generate its pages. I posted this over at Stack Overflow, but they sent me over here :) Hoping you guys can help.

Command used: `wget --convert-links --mirror --trust-server-names`. I thought it would run through the site, following each link and collecting the files with the extension I requested. Instead, the PHP files are downloaded as .php files, and when I open the page locally, Firefox pops up a box asking whether I want to open the page's .php file with gedit. I might also be missing exactly what "recursive" means in the context of wget, and I want to know how exactly it is trying to fetch the pages. I guess I could always take a poke around the source, although I don't know how messy the project is.

From the wget man page on `-O`, a few examples: `wget` with no flag outputs a file named index.html; `wget -O filename.html` outputs a file named filename.html.

EDIT: Output of the error message: `16:54:47 (128 KB/s) - '/index.php?id=917218' saved`, followed by `Removing /index.php?id=917218 since it should be rejected.`

robots.txt lines that came up: `User-agent: wget` / `Disallow: /` (next to a truncated comment, "The grub distributed client has been very…"), `User-agent: WebCopier` / `Disallow: /`, `User-agent: Fetch` / `Disallow: /`, plus `Disallow: /index.php/admin/`, `Disallow: /index.php/comment/reply/`, `Disallow: /index.phpdiff` and `Disallow: /index.phpoldid`.
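One factor worth ruling out, offered as an assumption rather than a diagnosis: GNU Wget obeys robots.txt when it retrieves recursively (the check can be switched off with `-e robots=off`), and the robots.txt lines quoted in the post include `User-agent: wget` followed by `Disallow: /`. The sketch below uses Python's standard `urllib.robotparser` as a stand-in for wget's own parser. `ROBOTS_TXT` is a hypothetical file assembled from the quoted lines, and `example.org` is a placeholder host.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt assembled from the lines quoted in the post;
# it is NOT a copy of the real site's file.
ROBOTS_TXT = """\
User-agent: wget
Disallow: /

User-agent: WebCopier
Disallow: /

User-agent: Fetch
Disallow: /

User-agent: *
Disallow: /index.php/admin/
Disallow: /index.php/comment/reply/
"""


def allowed(user_agent: str, url: str) -> bool:
    """Return True if ROBOTS_TXT permits `user_agent` to fetch `url`."""
    parser = RobotFileParser()
    parser.parse(ROBOTS_TXT.splitlines())  # parse() also marks the rules as loaded
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    base = "http://example.org"  # placeholder host
    for agent in ("Wget/1.21", "Mozilla/5.0"):
        for path in ("/index.php?id=917218", "/index.php/admin/"):
            print(f"{agent:12} {path:22} allowed={allowed(agent, base + path)}")
```

Under this hypothetical file, an agent identifying as `Wget/…` is refused every path. A browser-like agent is refused only the `/index.php/admin/` and `/index.php/comment/reply/` prefixes. Python's parser only approximates how wget itself matches user agents.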

The problem doesn't occur, however, when I run the very same wget command directly on that link (`/index.php?id=917218`). It usually happens when the link wget is trying to fetch ends with a query string, such as `?id=917218`. wget treats the link as a downloadable file, when it should just follow it to reach the page that actually contains the files (or more links to follow) that I want.
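The following is a simplified model of why a query-string link can be fetched, scanned for links, and then deleted. This is consistent with the `Removing /index.php?id=917218 since it should be rejected.` line the post quotes. As we read the GNU Wget manual, recursive mode checks the `-A`/`-R` lists twice: once against the file name in the URL path before downloading, and again against the local file name afterwards. The post's own output shows that the local name keeps the query string. The helpers `url_file_part`, `local_file_name` and `accepted` are hypothetical, not wget's code. The rule that an entry without `*`, `?`, `[` or `]` counts as a suffix follows the manual's description of `-A`. `-A php` is an assumed setting.

```python
import posixpath
from fnmatch import fnmatchcase
from typing import Iterable
from urllib.parse import urlsplit

# Characters that make an -A/-R entry a glob pattern instead of a suffix.
WILDCARDS = set("*?[]")


def url_file_part(url: str) -> str:
    """File name from the URL path only; the query string is ignored."""
    name = posixpath.basename(urlsplit(url).path)
    return name or "index.html"  # a directory URL is saved as index.html


def local_file_name(url: str) -> str:
    """Name as saved on disk, with the query string kept after '?'."""
    query = urlsplit(url).query
    name = url_file_part(url)
    return f"{name}?{query}" if query else name


def accepted(name: str, acclist: Iterable[str]) -> bool:
    """Glob-match wildcard entries; treat plain entries as suffixes."""
    for entry in acclist:
        if WILDCARDS & set(entry):
            if fnmatchcase(name, entry):
                return True
        elif name.endswith(entry):
            return True
    return False


if __name__ == "__main__":
    url = "http://example.org/index.php?id=917218"  # placeholder host
    acclist = ["php"]  # assumed equivalent of -A php
    first, second = url_file_part(url), local_file_name(url)
    print("check 1 (before download):", first, accepted(first, acclist))
    print("check 2 (after download): ", second, accepted(second, acclist))
```

Under these assumptions, `index.php` passes the first check, so the page is downloaded and scanned for links. `index.php?id=917218` then fails the second check and is removed, which matches the message in the EDIT.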
